Paper Reading: Robot learning 2

I want to stand with them.

RL-BASED DRONE PAPER READING

Planar Pose Graph#

A stability framework for planar pose optimization

The paper decouples the planar pose optimization problem into two subproblems and analyzes each of them. This converts the original matrix-form problem into a linear one, which is simpler to handle, and the target is then optimized by solving the linear problem.
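To make the "linear problem" idea concrete, here is a minimal sketch under a loud assumption: that the two subproblems are rotation and translation (the notes above do not name them). If the planar rotations are held fixed, every relative measurement `t_j - t_i = R_i @ t_ij` is linear in the unknown translations, so plain least squares solves it. The function `solve_translations` and the toy data are hypothetical illustrations, not the paper's algorithm.

```python
import numpy as np

def rot2d(theta):
    """2x2 planar rotation matrix."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])

def solve_translations(thetas, edges):
    """Least-squares translations for fixed headings `thetas`.

    edges: list of (i, j, t_ij), where t_ij is the position of pose j
    measured in the frame of pose i. Pose 0 is anchored at the origin.
    """
    n = len(thetas)
    A = np.zeros((2 * len(edges) + 2, 2 * n))
    b = np.zeros(2 * len(edges) + 2)
    for k, (i, j, t_ij) in enumerate(edges):
        rows = slice(2 * k, 2 * k + 2)
        # Linear constraint: t_j - t_i = R_i @ t_ij
        A[rows, 2 * j:2 * j + 2] = np.eye(2)
        A[rows, 2 * i:2 * i + 2] = -np.eye(2)
        b[rows] = rot2d(thetas[i]) @ np.asarray(t_ij, dtype=float)
    # Gauge constraint: t_0 = 0 (otherwise the solution can drift freely)
    A[-2:, 0:2] = np.eye(2)
    sol, *_ = np.linalg.lstsq(A, b, rcond=None)
    return sol.reshape(n, 2)

# Toy triangle: true positions (0,0), (1,0), (1,1), headings 0, 90, 180 degrees.
thetas = [0.0, np.pi / 2, np.pi]
edges = [(0, 1, [1.0, 0.0]), (1, 2, [1.0, 0.0]), (2, 0, [1.0, 1.0])]
positions = solve_translations(thetas, edges)
```

With noise-free measurements the recovered positions match the true ones; with noisy measurements the same call returns the least-squares compromise.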

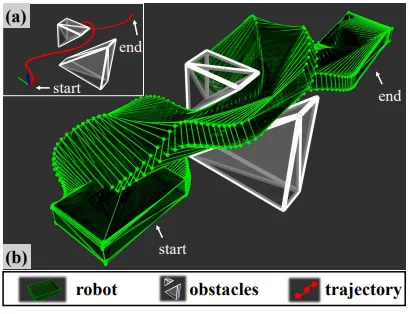

Whole-Body Scale Optimization#

Accurate whole-body collision trajectory prediction with linear scaling

It proposes an accurate whole-body collision formulation with linear scaling, and derives analytic gradients for trajectory optimization.
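One way to picture a scale-based collision measure with an analytic gradient is sketched below. This is an illustrative assumption, not the paper's formulation: the robot body is modeled as a convex polytope `{x : A x <= b}` in its own frame (with `b > 0`, so the origin is inside), and we ask by what factor the body must be scaled about its origin before it reaches an obstacle point `p`. That factor is `max_i (a_i . p / b_i)`; a value above 1 means the point is outside the body. The name `min_touch_scale` is hypothetical.

```python
import numpy as np

def min_touch_scale(A, b, p):
    """Smallest uniform scale s such that p lies in {x : A x <= s * b}.

    Returns (s, grad), where grad is the gradient of s with respect to p
    taken from the active face (a subgradient at ties).
    """
    ratios = (A @ p) / b
    k = int(np.argmax(ratios))
    return ratios[k], A[k] / b[k]

# Axis-aligned box body with half-extents 1.0 x 0.5 (hypothetical robot shape).
A = np.array([[1.0, 0.0], [-1.0, 0.0], [0.0, 1.0], [0.0, -1.0]])
b = np.array([1.0, 1.0, 0.5, 0.5])

scale_far, grad_far = min_touch_scale(A, b, np.array([2.0, 0.2]))    # outside the body
scale_in, grad_in = min_touch_scale(A, b, np.array([0.5, 0.25]))     # inside the body
```

Because the scale is a maximum of linear functions of `p`, its gradient is simply the normalized row of the active face, which is what makes it convenient inside a gradient-based trajectory optimizer.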

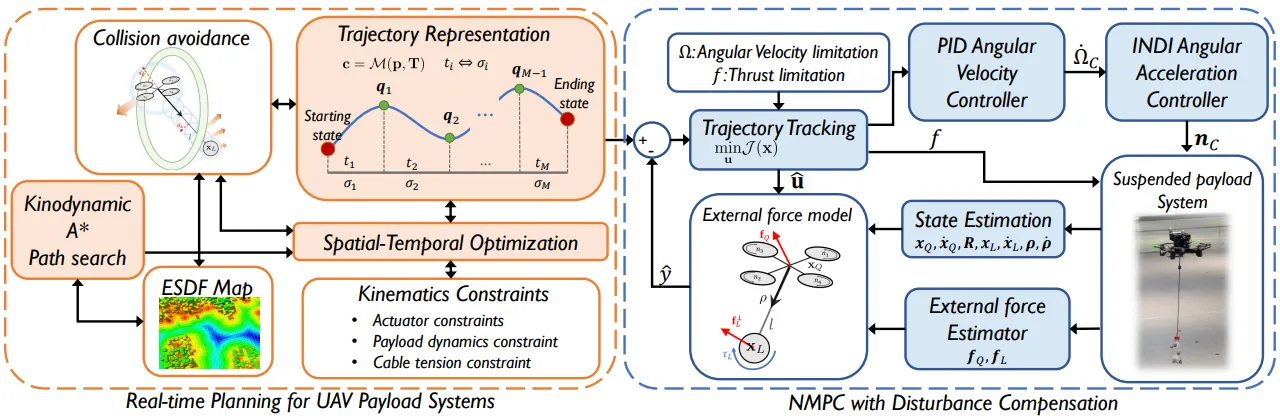

UAV Payload Transportation#

Planning and control for complex structures

The paper controls both the UAV attitude and the payload attitude during heavy-load transportation, enabling automatic swing suppression.

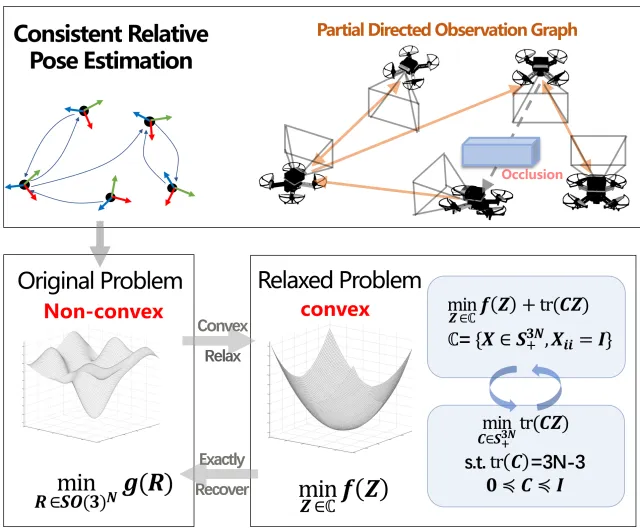

Robotic Relative Localization#

Swarm localization

Relative localization for a robot swarm with mutual observations. It addresses recovering relative poses for partially mutually observed robot groups and proposes a robust, scalable algorithm.

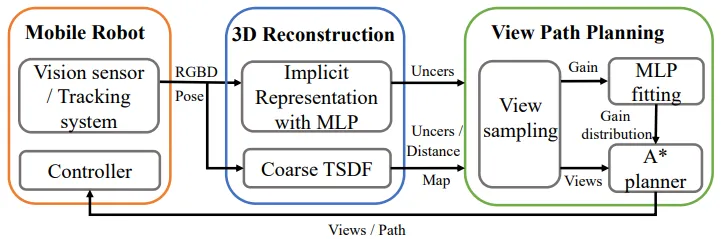

Path Planning#

Path planning

Topic: improve view-path planning efficiency for autonomous implicit reconstruction and enhance the reconstruction quality of target images. Methods: (1) approximate information-gain fields, (2) hybrid representations, and (3) a new path-planning strategy.

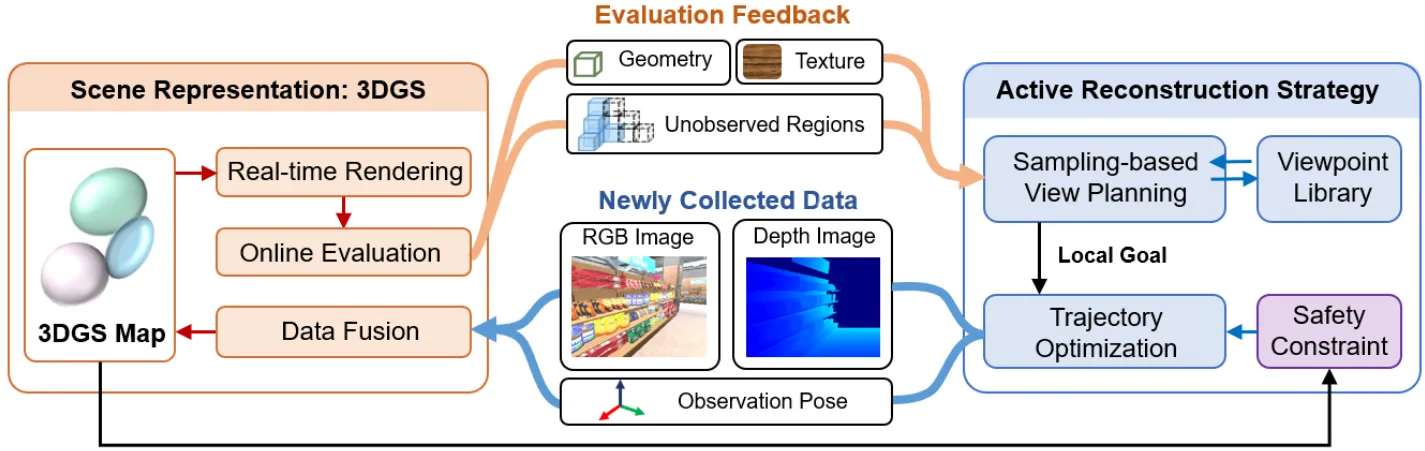

GS-Planner#

Gaussian-Splatting-based Planning Framework

3D Gaussian reconstruction (mapping + path planning, with emphasis on mapping). It proposes completeness and mapping-quality evaluation metrics for 3D Gaussian mapping, and designs a sampling-based active view-planning algorithm that guides reconstruction of unobserved regions and improves mapping quality.
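As a rough illustration of sampling-based active view planning, the sketch below scores candidate viewpoints by how many unobserved cells of a 2D grid fall within sensing range and picks the best one. This is a deliberately simplified stand-in: the grid, the circular sensing model, and the function `pick_next_view` are assumptions for illustration, and the gain used here is a plain count rather than the paper's completeness and quality metrics.

```python
import numpy as np

def pick_next_view(unobserved, candidates, radius):
    """Return the candidate (x, y) covering the most unobserved cells, and its gain."""
    ys, xs = np.nonzero(unobserved)
    cells = np.stack([xs, ys], axis=1)
    # Gain of a candidate = number of unobserved cells within `radius` of it.
    gains = [int((np.linalg.norm(cells - c, axis=1) <= radius).sum()) for c in candidates]
    best = int(np.argmax(gains))
    return candidates[best], gains[best]

# Toy map: a 10x10 grid with one unobserved 3x3 patch in a corner.
unobserved = np.zeros((10, 10), dtype=bool)
unobserved[7:10, 7:10] = True

candidates = np.array([[1, 1], [8, 8]])  # in practice these would be sampled
best_view, best_gain = pick_next_view(unobserved, candidates, radius=2.0)
```

In a real planner the candidates would be drawn at random from free space and the gain would also account for visibility and travel cost.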

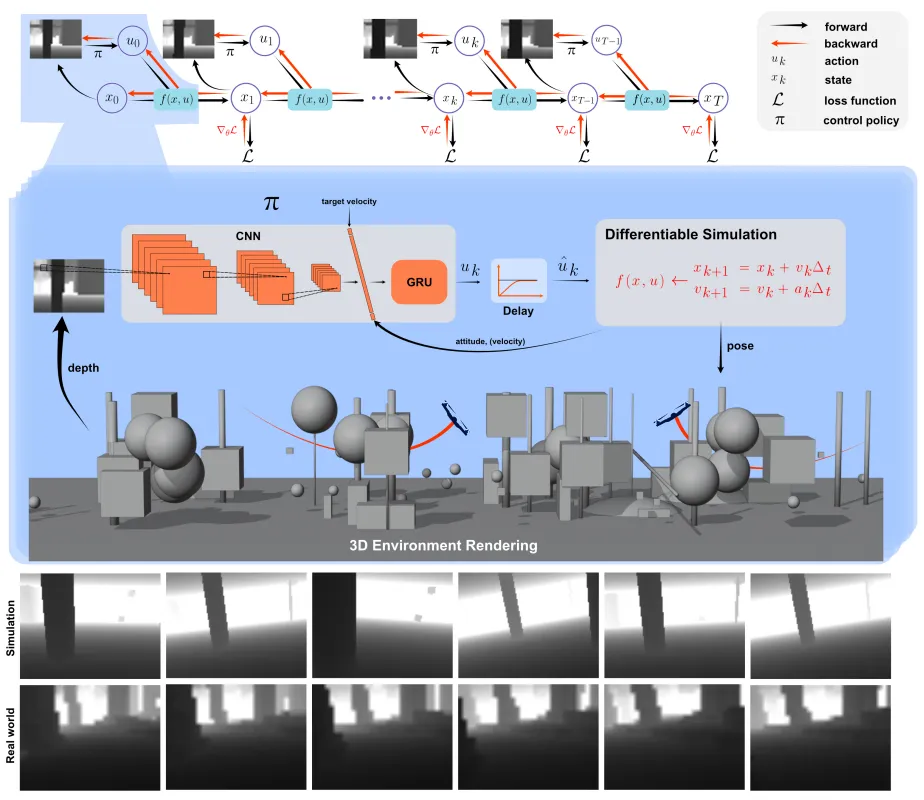

Back to Newton’s Laws#

Vision-based optimal control

This paper falls outside the scope of reinforcement learning. As its title suggests, it goes back to Newton’s laws and uses physics-based approaches to achieve high-speed UAV flight, while still relying on vision algorithms.

ARiADNE#

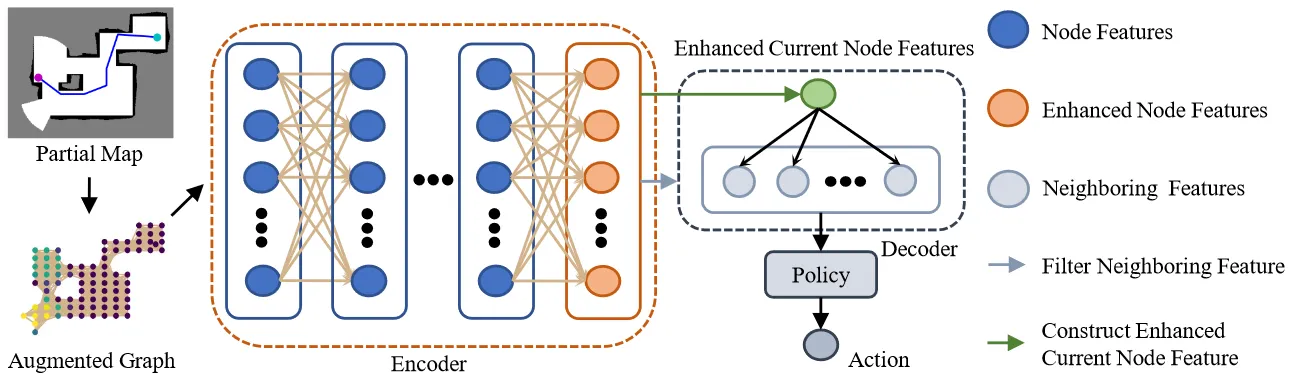

Attention-based reinforcement learning method

ARiADNE is an attention-based RL method for autonomous robot exploration of unknown environments that targets short-sighted (myopic) planning. Its key contribution is an attention network that learns multi-scale spatial dependencies in the map, combined with SAC (soft actor-critic) to make non-myopic path decisions. It outperforms frontier-based, sampling-based, and CNN-based DRL baselines in exploration efficiency, and its practicality is validated in ROS simulation.
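For readers unfamiliar with the attention mechanism mentioned here, the following is a minimal NumPy sketch of standard scaled dot-product self-attention over a set of map feature vectors. It shows only the generic operation, not ARiADNE's actual network: the node features, the weight matrices, and the optional connectivity `mask` are all assumptions made for illustration.

```python
import numpy as np

def self_attention(X, Wq, Wk, Wv, mask=None):
    """Scaled dot-product self-attention.

    X: (n, d) node features. mask: optional (n, n) boolean array,
    True where node i is allowed to attend to node j.
    """
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[1])
    if mask is not None:
        scores = np.where(mask, scores, -1e9)  # block disallowed pairs
    scores = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)  # row-wise softmax
    return weights @ V, weights

rng = np.random.default_rng(0)
X = rng.normal(size=(5, 4))  # 5 hypothetical map nodes, 4 features each
Wq, Wk, Wv = (rng.normal(size=(4, 8)) for _ in range(3))

# Hypothetical connectivity: each node attends to itself and its chain neighbors.
mask = np.eye(5, dtype=bool) | np.eye(5, k=1, dtype=bool) | np.eye(5, k=-1, dtype=bool)
out, weights = self_attention(X, Wq, Wk, Wv, mask=mask)
```

Stacking several such layers lets information propagate across more hops of the map, which is one common way attention models capture dependencies at multiple spatial scales.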

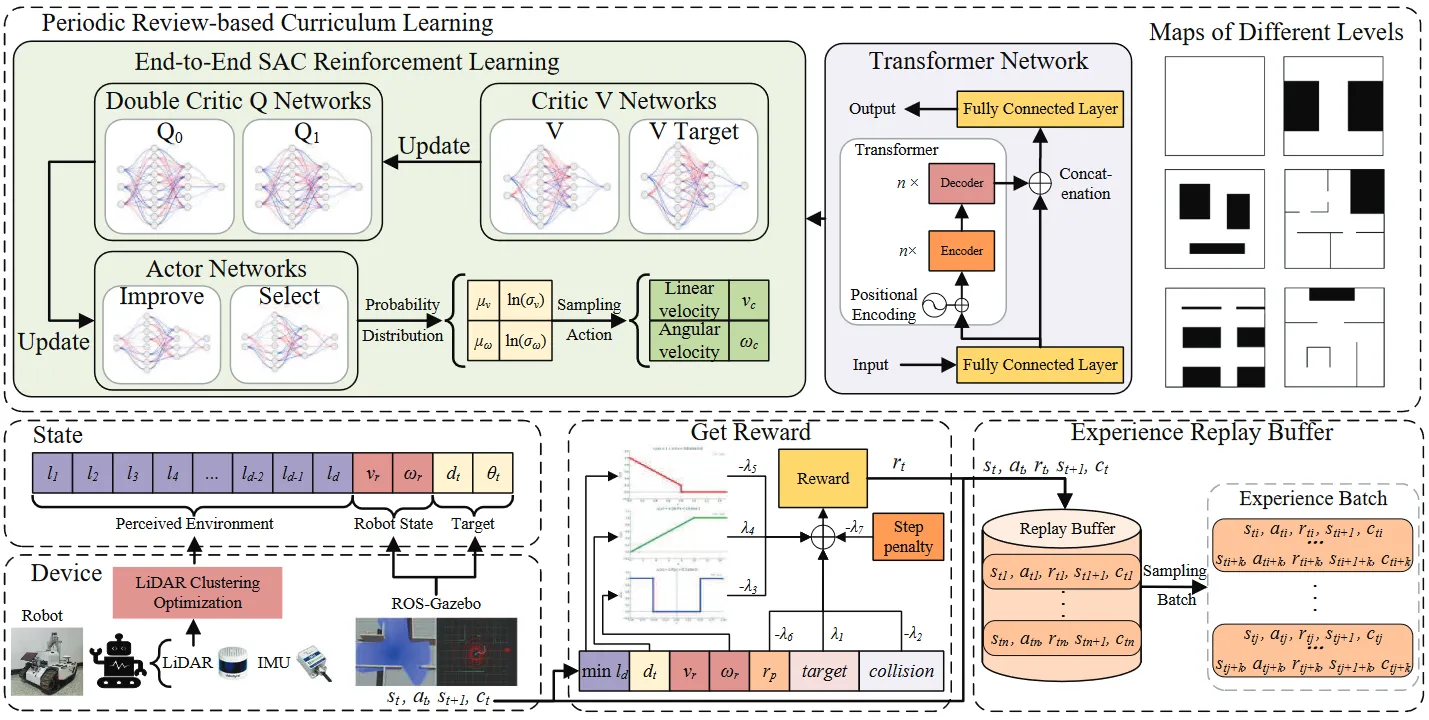

CTSAC#

Transformer + SAC with periodic-review curriculum learning and LiDAR clustering optimization

CTSAC targets three problems in goal-directed autonomous robot exploration: weak environment reasoning, slow convergence, and difficult sim-to-real transfer. It embeds a Transformer into the SAC perception network to exploit history information and improve foresight, designs a periodic-review curriculum learning scheme to mitigate catastrophic forgetting and accelerate training, and optimizes LiDAR clustering to narrow the sim-to-real gap. ROS-Gazebo simulation and real-robot experiments show a higher exploration success rate and efficiency than traditional non-learning methods and mainstream learning-based algorithms.
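The notes do not spell out the review rule, so the following is only one plausible reading of a "periodic-review" curriculum: training advances through stages of increasing difficulty, and every few episodes an earlier stage is revisited so that previously learned behavior is not forgotten. The function `review_schedule` and its parameters are hypothetical.

```python
def review_schedule(num_stages, episodes_per_stage, review_every):
    """Return the stage index to train on for each episode.

    Within stage s > 0, every `review_every`-th episode is replaced by a
    review episode that cycles through the earlier stages 0..s-1.
    """
    schedule = []
    for stage in range(num_stages):
        for ep in range(episodes_per_stage):
            is_review = stage > 0 and (ep + 1) % review_every == 0
            if is_review:
                schedule.append((ep // review_every) % stage)
            else:
                schedule.append(stage)
    return schedule

schedule = review_schedule(num_stages=3, episodes_per_stage=4, review_every=2)
# schedule == [0, 0, 0, 0, 1, 0, 1, 0, 2, 0, 2, 1]
```

A training loop would look up `schedule[episode]` to choose which environment difficulty to instantiate for that episode.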

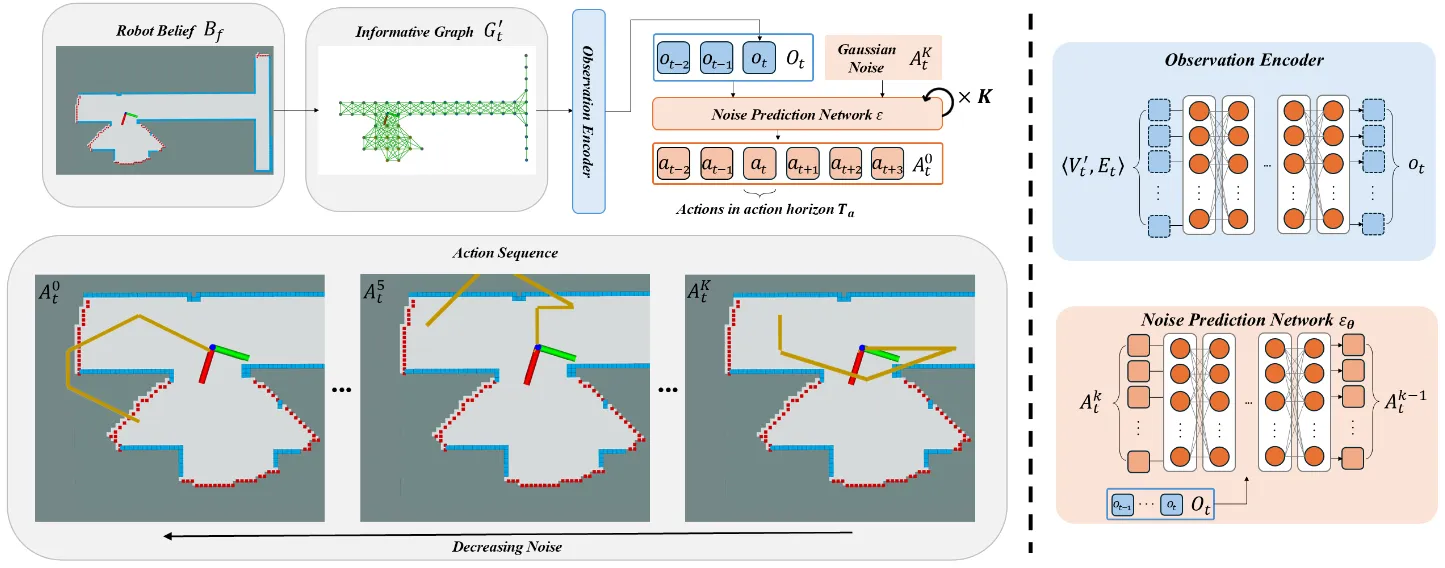

DARE#

Diffusion policy + attention map encoder to generate exploration paths from optimal expert demonstrations

It proposes DARE, a generative method for autonomous robot exploration. It uses an attention encoder to extract environment-map features, and a diffusion-policy network to learn exploration patterns from optimal expert demonstrations. It can infer the structure of unknown regions based on local environment beliefs and generate explicit long-horizon planned paths. Simulation and real-world deployment show exploration efficiency comparable to mainstream traditional and learning-based planners, with strong generalization and sim-to-real transfer.
